The Convergence of Heat and Sight: The New Standard for Edge AI Vision

- IntelliGienic

- Jan 13

Updated: Mar 27

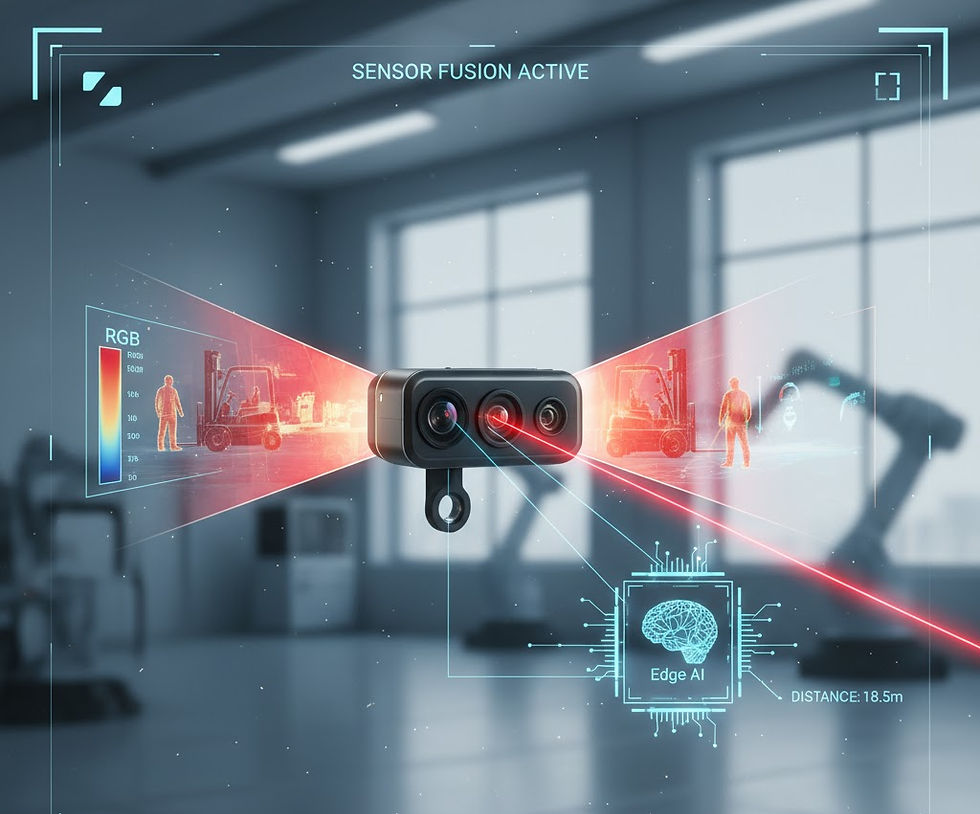

Integrating thermal sensors with visible light (RGB) cameras is no longer just a luxury for high-end military tech; in 2026, it is becoming the standard for any AI Vision project that requires "all-weather" perception.

However, as a creator or project manager, you’ll quickly find that merging these two worlds is an exercise in managing physics and high-level math. Here is a deeper look at why this integration is the future and the "polynomial" secret behind accurate measurement.

The Geometry Gap: Widescreen vs. Boxy

If you’ve ever tried to overlay a thermal image on an RGB stream, you’ve likely run into the "rim" problem: the two frames have different shapes, so one always overhangs the other at the edges. Most high-quality RGB sensors are born for the display world, featuring a rectangular, widescreen aspect ratio (typically 16:9). Conversely, the majority of thermal bolometer modules use a "boxier" aspect ratio (typically 4:3 or 5:4).

While thermal sensors aren't always perfectly square, they are designed with a much tighter ratio than RGB cameras to maximize efficiency. Because thermal lenses are made of specialized, costly infrared-transparent materials, a "squarish" sensor is the most economical way to utilize the lens's circular image area. This design ensures that the sensor captures the maximum amount of thermal energy from the lens without wasting expensive optical real estate on a widescreen format that the industry doesn't require.

Because of this geometric mismatch, you cannot simply perform a pixel-to-pixel overlay. To align them, engineers must decide which part of the RGB image to crop away so that what remains matches the thermal data. Interestingly, this "boxy" thermal format is a quiet advantage for AI: many vision networks are configured for square 1:1 inputs, so a 4:3 or 5:4 thermal frame needs less padding or distortion during preprocessing than a 16:9 RGB frame.
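To make the cropping decision concrete, here is a minimal sketch that computes the largest centered crop of an RGB frame matching a thermal aspect ratio, plus the share of a square canvas that would be padding if a frame were letterboxed into it. The function names are hypothetical, and the sketch handles aspect ratio only: it assumes both sensors look at the same scene and ignores field-of-view differences, lens distortion, and parallax.

```python
def center_crop_to_aspect(width, height, target_w, target_h):
    """Return (x0, y0, crop_w, crop_h): the largest centered crop of a
    width x height frame with aspect ratio target_w:target_h."""
    # Compare ratios by cross-multiplying integers to avoid float error.
    if width * target_h > height * target_w:
        # Frame is wider than the target: keep full height, trim the sides.
        crop_h = height
        crop_w = height * target_w // target_h
    else:
        # Frame is taller than (or equal to) the target: trim top and bottom.
        crop_w = width
        crop_h = width * target_h // target_w
    x0 = (width - crop_w) // 2
    y0 = (height - crop_h) // 2
    return x0, y0, crop_w, crop_h


def letterbox_padding_fraction(width, height):
    """Fraction of a square canvas left as padding when the frame is
    scaled to fit inside it without distortion."""
    short_side, long_side = sorted((width, height))
    return 1.0 - short_side / long_side


# Crop a 1920x1080 (16:9) RGB frame to the 4:3 shape of a thermal module.
print(center_crop_to_aspect(1920, 1080, 4, 3))
# Compare padding needed for 16:9 RGB and 4:3 thermal on a square input.
print(letterbox_padding_fraction(1920, 1080), letterbox_padding_fraction(640, 480))
```

For a 1920x1080 frame the crop keeps the full height and drops 240 columns from each side. Letterboxed into a square, the 16:9 frame leaves about 44% of the canvas as padding, against 25% for a 4:3 frame.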

Context vs. Precision: The Hybrid FOV Strategy

A major trend in 2026 is the use of different focal lengths, and therefore different fields of view (FOV), to maximize the strengths of each sensor:

RGB for Context (Wide FOV): The visible light sensor provides the "big picture." In a medical clinic or a smart warehouse, this gives the AI the environmental context—identifying a person, a forklift, or a specific piece of machinery.

Thermal for Precision (Centered FOV): The thermal module often has a narrower field of view, concentrating its limited pixel count on the center of the scene.

In industrial automation, this allows the RGB to monitor an entire production line while the thermal sensor performs a "surgical" inspection of a specific part only when it flows into the center "active zone."
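The "active zone" idea can be sketched in a few lines. The example below estimates which centered rectangle of the RGB frame the narrower thermal field of view covers, then checks whether an RGB detection has moved into it. It is a rough approximation under stated assumptions: ideal pinhole lenses, both sensors sharing the same optical axis, and no parallax. The function names and the FOV numbers are illustrative, not taken from any particular module.

```python
import math


def thermal_footprint_in_rgb(rgb_w, rgb_h, rgb_hfov_deg, rgb_vfov_deg,
                             th_hfov_deg, th_vfov_deg):
    """Approximate (x0, y0, w, h) of the thermal FOV inside the RGB frame,
    in RGB pixels, for co-axial pinhole cameras."""
    def fraction(narrow_deg, wide_deg):
        # In a pinhole model, image extent scales with tan(FOV / 2).
        return (math.tan(math.radians(narrow_deg) / 2)
                / math.tan(math.radians(wide_deg) / 2))

    w = rgb_w * fraction(th_hfov_deg, rgb_hfov_deg)
    h = rgb_h * fraction(th_vfov_deg, rgb_vfov_deg)
    return (rgb_w - w) / 2, (rgb_h - h) / 2, w, h


def in_active_zone(box, zone):
    """True if the center of a detection box (x, y, w, h) lies inside zone."""
    bx, by, bw, bh = box
    zx, zy, zw, zh = zone
    cx, cy = bx + bw / 2, by + bh / 2
    return zx <= cx <= zx + zw and zy <= cy <= zy + zh


# Hypothetical optics: 90x60 degree RGB lens, 45x30 degree thermal lens.
zone = thermal_footprint_in_rgb(1920, 1080, 90, 60, 45, 30)
print(in_active_zone((900, 500, 100, 100), zone))  # part near the center
print(in_active_zone((0, 0, 100, 100), zone))      # part in the corner
```

A real system would replace the FOV ratio with a measured calibration between the two cameras, since even a small baseline between the lenses shifts the footprint for nearby objects.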

The Math of Heat: Polynomials and NUC

When you move from simple imaging to actual radiometric temperature measurement, the challenge shifts from optics to mathematics. To get professional-grade accuracy, you can’t just trust the raw "counts" from the sensor.

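Modern systems allow users to set reference temperatures at specific spots on the screen to calibrate the device. Under the hood, the system is performing two related steps that engineers call Non-Uniformity Correction (NUC), which evens out pixel-to-pixel differences in sensor response, and Radiometric Calibration, which maps the corrected counts to actual temperatures.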
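For readers who want a feel for what NUC does, here is a minimal sketch of one common textbook form, the two-point correction. This is an assumption for illustration, not a description of how any specific product works. It takes two frames of uniform reference sources at different temperatures and derives a gain and offset for every pixel so that all pixels agree on both. The function names and the simulated sensor values are hypothetical.

```python
import numpy as np


def two_point_nuc(cold_frame, hot_frame):
    """Per-pixel gain and offset from two flat-field frames of uniform
    sources. Assumes every pixel responds linearly and hot != cold
    everywhere (a dead pixel would divide by zero here)."""
    cold = np.asarray(cold_frame, dtype=float)
    hot = np.asarray(hot_frame, dtype=float)
    # Target: each pixel should report the frame-wide mean for each source.
    gain = (hot.mean() - cold.mean()) / (hot - cold)
    offset = cold.mean() - gain * cold
    return gain, offset


def apply_nuc(raw, gain, offset):
    """Apply the per-pixel linear correction to a raw frame."""
    return gain * np.asarray(raw, dtype=float) + offset


# Simulate a 2x2 sensor whose pixels have slightly different responses.
pixel_gain = np.array([[1.0, 1.1], [0.9, 1.0]])
pixel_bias = np.array([[0.0, 5.0], [-5.0, 10.0]])
cold = pixel_gain * 100 + pixel_bias
hot = pixel_gain * 200 + pixel_bias
gain, offset = two_point_nuc(cold, hot)

# A uniform scene between the two references should now look uniform.
scene = pixel_gain * 150 + pixel_bias
corrected = apply_nuc(scene, gain, offset)
print(corrected.max() - corrected.min())
```

The output is still in raw counts. Converting those counts to degrees is the separate radiometric step.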

The Secret Sauce: The system doesn't just apply a flat "offset" to the temperature. It uses Polynomial Regression to calculate a unique adjustment for every single pixel on the screen.

Because of lens physics (vignetting), less infrared radiation reaches the corners of the sensor than the center, so an uncorrected corner pixel reads differently from a center pixel looking at the same target. By fitting these high-order polynomials across the frame, the Edge AI ensures that a 38°C reading on the far edge of the screen is just as accurate as one in the dead center. This is what separates a "thermal toy" from a professional sensing tool.
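As a rough illustration of the polynomial idea, the sketch below fits a two-dimensional polynomial surface to the temperature errors observed at a handful of reference spots, then evaluates that surface at every pixel to get a per-pixel adjustment. The model form, the default degree of 2, the normalized coordinates, and all function names are assumptions made for this example. A production pipeline may use a different polynomial or fit raw counts rather than temperatures.

```python
import numpy as np


def design_matrix(x, y, degree):
    """Stack the monomials x**i * y**j with i + j <= degree on the last axis."""
    cols = [x**i * y**j
            for i in range(degree + 1)
            for j in range(degree + 1 - i)]
    return np.stack(cols, axis=-1)


def fit_correction_surface(xs, ys, errors, degree=2):
    """Least-squares fit of a 2-D polynomial to (reference - measured)
    errors sampled at normalized pixel coordinates in [-1, 1]."""
    A = design_matrix(np.asarray(xs, float), np.asarray(ys, float), degree)
    coeffs, *_ = np.linalg.lstsq(A, np.asarray(errors, float), rcond=None)
    return coeffs


def correction_map(coeffs, width, height, degree=2):
    """Evaluate the fitted polynomial at every pixel of a width x height frame."""
    xx, yy = np.meshgrid(np.linspace(-1, 1, width), np.linspace(-1, 1, height))
    return design_matrix(xx, yy, degree) @ coeffs


# Synthetic reference spots on a 3x3 grid: readings fall off toward the
# corners, so the needed correction grows with distance from the center.
gx, gy = np.meshgrid([-1.0, 0.0, 1.0], [-1.0, 0.0, 1.0])
xs, ys = gx.ravel(), gy.ravel()
errors = 0.5 * (xs**2 + ys**2)

coeffs = fit_correction_surface(xs, ys, errors)
cmap = correction_map(coeffs, width=5, height=5)
print(np.round(cmap[2, 2], 6), np.round(cmap[0, 0], 6))  # center vs corner
```

Adding `cmap` to a measured temperature frame of the same size applies the adjustment. With real data the number of reference spots must be at least the number of polynomial coefficients (six for degree 2), and higher degrees need correspondingly more spots to avoid overfitting.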

Multi-Sensor Fusion: The New Standard for 2026

At the end of the day, multi-sensor fusion is the only way forward for advanced Edge AI Vision and robotics. A robot cannot navigate a smoky corridor or a pitch-black warehouse with RGB alone. By merging the contextual richness of RGB with the invisible data of Thermal, we are giving machines a sense of "touch" from a distance.

The merge has already started. Whether it's for medical diagnostics, industrial safety, or autonomous navigation, the dual-spectrum approach is the key to unlocking true environmental perception.

Let’s Build the Future Together

At IntelliGienic, we have been deeply immersed in the world of multi-sensor fusion, solving these exact challenges of alignment, polynomial calibration, and Edge AI integration. We believe that "Vision" is more than just what meets the eye—it's about the data you can't see.

Are you planning a project that requires advanced thermal-RGB integration or specialized perception? Whether you are a technical creator or a buyer looking for a robust solution, let’s discuss how we can help you bring your next creation to life.
