Giving Machines Superpowers: The Fusion of RGB, Thermal, and Laser

- IntelliGienic

- Jun 24, 2025

- 3 min read

Updated: Dec 18, 2025

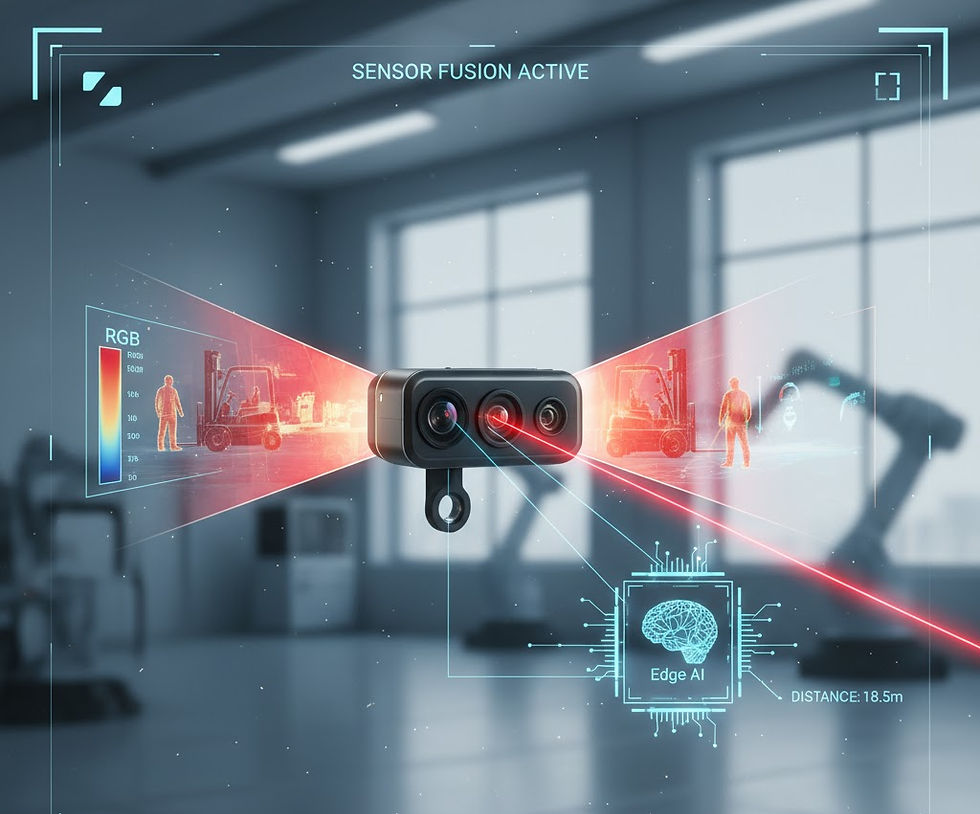

We’ve talked about the incredible detail a single laser rangefinder can provide, but what happens when you combine it with two different forms of vision and an Edge AI brain?

The answer is simple: You build a sensory system that perceives what the human eye cannot.

We are thrilled to share that our recent multi-sensor fusion prototype—combining RGB Camera, Thermal Camera, and Laser Rangefinder (LRF)—is confirming our belief: this integrated package is the pivotal component for tomorrow's autonomous systems, from smart cities to advanced robotics.

This isn't just bolting sensors together; it’s about creating a single, comprehensive understanding of the world. Here are a few fun, game-changing examples of what this fusion makes possible:

Vision That Never Fails: The All-Weather Guardian

Traditional smart cameras (RGB only) work well on a sunny day, but their performance collapses in dense fog, heavy rain, or complete darkness. Our fusion prototype is designed to keep working in these conditions.

How it Works: The RGB camera provides high-resolution texture and color (the "what"). The Thermal camera does not depend on visible light, so it still picks up heat signatures when the scene is dark or obscured (the "where is it warm"). The LRF provides the exact distance (the "how far away").

The Superpower: An object recognition model can spot a lost hiker by heat signature in thick fog. The LRF then measures the exact distance to that hiker, which a rescue team can combine with the unit's own position and heading to locate them, while the RGB camera provides visual identification once they get closer. This system doesn't rely on light alone: it uses heat, distance, and light together.
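To make the hand-off to a rescue team concrete, here is a minimal sketch of how an LRF range could be turned into a map position. It assumes the sensor unit knows its own latitude, longitude, and compass bearing to the target, none of which the prototype description confirms, and the function name `project_target` is purely illustrative. It uses the standard spherical destination-point formula and treats the measured range as ground distance, ignoring elevation differences.

```python
import math

EARTH_RADIUS_M = 6_371_000.0  # mean Earth radius (spherical approximation)


def project_target(lat_deg, lon_deg, bearing_deg, range_m):
    """Return (lat, lon) in degrees of the point range_m away along bearing_deg.

    Hypothetical helper: lat/lon/bearing are assumed to come from the
    sensor unit's own GPS and compass, range_m from the LRF.
    """
    lat1 = math.radians(lat_deg)
    lon1 = math.radians(lon_deg)
    bearing = math.radians(bearing_deg)
    angular = range_m / EARTH_RADIUS_M  # distance as an angle on the sphere

    lat2 = math.asin(
        math.sin(lat1) * math.cos(angular)
        + math.cos(lat1) * math.sin(angular) * math.cos(bearing)
    )
    lon2 = lon1 + math.atan2(
        math.sin(bearing) * math.sin(angular) * math.cos(lat1),
        math.cos(angular) - math.sin(lat1) * math.sin(lat2),
    )
    return math.degrees(lat2), math.degrees(lon2)


# Example: a heat signature ranged at 350 m, bearing 72 degrees from the unit.
hiker_lat, hiker_lon = project_target(46.5, 8.0, 72.0, 350.0)
```

Over the few hundred meters a rangefinder typically covers, the spherical approximation is more than adequate; the compass bearing is likely to be the larger source of error.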

Situational Awareness: The Smart Surveillance System

Current surveillance is reactive: an alarm goes off after a perimeter breach. Fusion makes it proactive and intelligent.

How it Works: An Edge AI model detects a human (using RGB for form recognition) walking near a sensitive fence. The LRF continuously measures the distance to that person, and the change in distance over time gives their speed toward or away from the sensor. The Thermal camera checks whether the person is carrying anything unusually hot, such as a powered device, that may warrant a closer look.

The Superpower: The system can define a "Threat Zone" with extreme precision. It's not just "object detected," it’s "Human detected at 45.3 meters, moving at 2.1 m/s, with an elevated heat signature." This allows security teams to prioritize and respond based on the actual risk profile, not just a blurry video alert.
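As a rough illustration of how such an alert could be assembled, the sketch below derives speed from two timestamped LRF readings and appends the thermal flag. Everything here is an assumption for illustration: the `RangeSample` type, the function names, and the 45 °C threshold are hypothetical, and the speed is only the component along the line of sight, so a person walking parallel to the fence would read close to zero.

```python
from dataclasses import dataclass


@dataclass
class RangeSample:
    t_s: float      # timestamp in seconds
    range_m: float  # LRF distance in meters


HOT_THRESHOLD_C = 45.0  # hypothetical cutoff for "elevated heat signature"


def closing_speed(samples):
    """Speed along the line of sight in m/s; positive means approaching."""
    first, last = samples[0], samples[-1]
    dt = last.t_s - first.t_s
    if dt <= 0:
        raise ValueError("samples must span a positive time interval")
    return (first.range_m - last.range_m) / dt


def describe(label, samples, max_temp_c):
    """Build a human-readable alert from the fused readings."""
    speed = abs(closing_speed(samples))
    message = (
        f"{label} detected at {samples[-1].range_m:.1f} meters, "
        f"moving at {speed:.1f} m/s"
    )
    if max_temp_c > HOT_THRESHOLD_C:
        message += ", with an elevated heat signature"
    return message


track = [RangeSample(0.0, 47.4), RangeSample(1.0, 45.3)]
print(describe("Human", track, max_temp_c=52.0))
```

A real system would smooth over many readings rather than two, since single LRF samples can be noisy.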

Robotic Handling: The Perfect Grip

In manufacturing and logistics, robots need to pick up objects quickly, regardless of lighting or surface material.

How it Works: A robotic arm approaches a part on a conveyor belt. The LRF provides millimeter-accurate distance to the part's surface, ensuring the gripper stops at the precise moment. The Thermal camera confirms the part isn't dangerously hot or cold before handling. The RGB camera confirms color and orientation for quality control.

The Superpower: This fusion enables "material-agnostic" precision. A robot can handle shiny, dark, reflective, or dimly lit objects with the same speed and accuracy, eliminating the slowdowns caused by visual confusion.
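The handling sequence above boils down to a small decision gate, sketched here under stated assumptions. The function name, the 5 mm stop distance, and the 5 to 60 °C safe band are all hypothetical placeholders, not values from the prototype, and the RGB quality-control step is left out to keep the example short.

```python
def grip_decision(range_mm, surface_temp_c,
                  stop_at_mm=5.0, safe_temp_c=(5.0, 60.0)):
    """Decide the gripper's next action from LRF range and thermal reading.

    Returns "reject" if the part is outside the safe temperature band,
    "approach" while the gripper is still too far away, else "grip".
    """
    low_c, high_c = safe_temp_c
    if not (low_c <= surface_temp_c <= high_c):
        return "reject"    # too hot or too cold to handle safely
    if range_mm > stop_at_mm:
        return "approach"  # keep closing the gap
    return "grip"          # within stop distance and safe to touch


print(grip_decision(120.0, 25.0))  # still far from the part
print(grip_decision(4.0, 25.0))    # close enough, safe temperature
print(grip_decision(4.0, 95.0))    # close enough, but too hot
```

Checking temperature first means an unsafe part is rejected before the arm spends time approaching it.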

Autonomous Vehicles: See the Danger, Know the Intent

Autonomous cars need not only to see obstacles but also to understand their significance instantly.

How it Works: A static object is detected ahead, and each sensor adds one piece of context:

- LRF: Confirms the object is 100 meters away.

- RGB: Identifies the object as a cardboard box.

- Thermal: Detects a heat signature within the box.

The Superpower: The AI immediately concludes: "It is a hot object (perhaps a small animal or an engine part) inside a negligible obstacle (box), located 100 meters away." Without thermal and LRF, it’s just a "box detected." Fusion lets the system rank hazards by context rather than by appearance alone, leading to smarter, safer navigation decisions.
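One way to picture this reasoning is as a small rule table over the three readings. The sketch below is an assumption-laden illustration, not the prototype's logic: the `FusedObject` type, the `NEGLIGIBLE_LABELS` set, and the three priority levels are all hypothetical. It also computes how long the vehicle has before reaching the object at its current speed.

```python
import math
from dataclasses import dataclass


@dataclass
class FusedObject:
    label: str                # from the RGB classifier
    range_m: float            # from the LRF
    has_heat_signature: bool  # from the thermal camera


# Hypothetical set of objects a vehicle could normally drive over.
NEGLIGIBLE_LABELS = {"cardboard box", "plastic bag"}


def assess(obj, vehicle_speed_mps):
    """Return (priority, seconds until the vehicle reaches the object)."""
    if vehicle_speed_mps > 0:
        time_to_reach_s = obj.range_m / vehicle_speed_mps
    else:
        time_to_reach_s = math.inf

    if obj.label not in NEGLIGIBLE_LABELS:
        priority = "high"      # a solid obstacle regardless of heat
    elif obj.has_heat_signature:
        priority = "elevated"  # harmless-looking, but something warm is inside
    else:
        priority = "low"
    return priority, time_to_reach_s


box = FusedObject("cardboard box", range_m=100.0, has_heat_signature=True)
print(assess(box, vehicle_speed_mps=25.0))
```

With RGB alone, the same box would fall into the lowest tier; the thermal reading is what lifts it, and the LRF range is what tells the planner how much time it has to react.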

The future of autonomy is not about better individual sensors; it is about smarter integration. By fusing the strengths of RGB, Thermal, and Laser, we are building systems that don't just perceive the environment—they understand it, opening up a wealth of opportunities for tomorrow's Edge AI-driven world.
