How Multi-Sensor Fusion Powers the Next Generation of Edge AI

- IntelliGienic

- Mar 9

Updated: Apr 8

In the rapidly evolving landscape of Industrial IoT and autonomous systems, the definition of "Vision" is undergoing a fundamental shift. For years, the industry focused on increasing pixel counts and frame rates, operating under the assumption that more data equaled better sight. But as we move toward truly autonomous infrastructure inspection, sophisticated residential security, and "Physical AI," we are discovering that sight alone is insufficient.

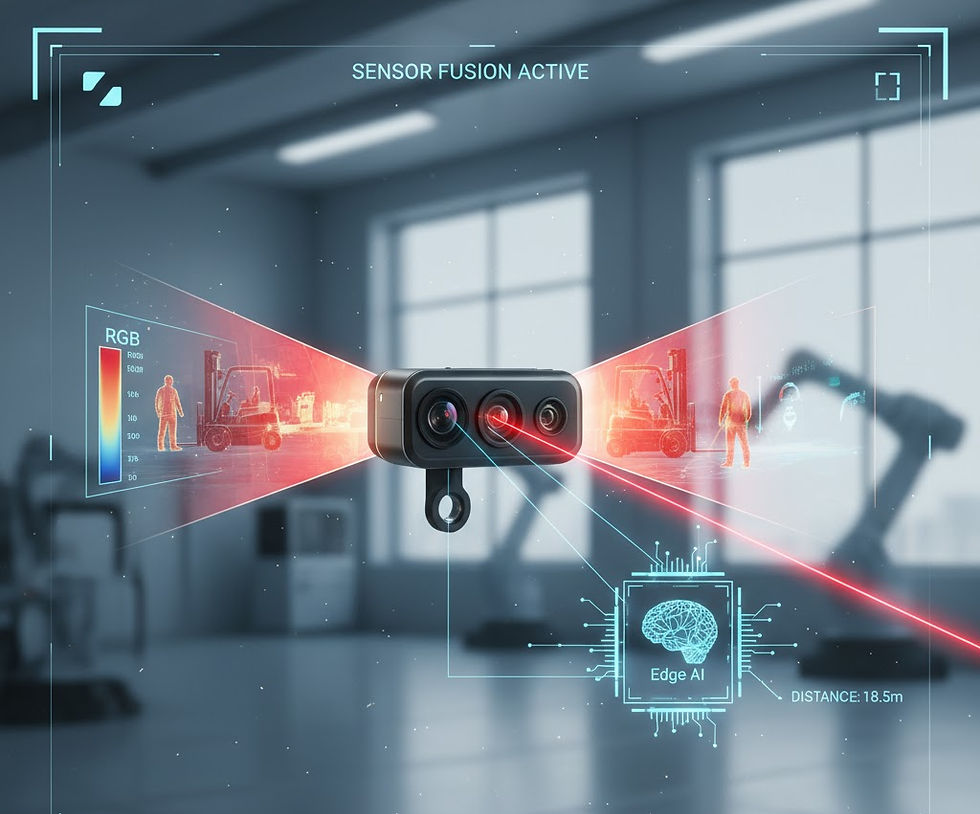

To build a system that can make critical decisions in real time, without human intervention, AI needs more than a picture. It needs a multi-dimensional, multi-spectral understanding of its environment. At IntelliGienic, we believe the future of Edge AI isn't found in a better camera, but in the intelligent fusion of disparate sensing modalities.

The Evolution of Vision: From Pixels to Perception

Traditional Edge AI has largely been "Visible Light First." We train models to recognize patterns, objects, and anomalies based on the same spectrum the human eye perceives. While this has led to incredible breakthroughs in facial recognition and basic defect detection, it remains inherently brittle.

A visible-light system is a fair-weather friend. It fails in total darkness, it struggles with glare or heavy fog, and it is easily "fooled" by 2D representations such as posters or reflections. Most importantly, a single standard camera cannot directly measure distance, so it cannot place an object in 3D space with any certainty. It can tell you what an object is, but it struggles to tell you exactly where it is or what state it is in internally.

To bridge this gap, we must move from "Computer Vision" to "Sensor Perception." This means moving beyond the pixel and integrating sensors that can see what the human eye cannot, and measure what a camera can only estimate.

Seeing the Invisible: The IntelliGienic Tri-Sensor Framework

At IntelliGienic, we recently validated a high-performance fusion framework designed to provide a complete, cross-checked "truth" of any given scene. By combining three distinct sensing modalities into a single, synchronized AI-ready stream, we allow the "Edge Brain" to perceive the world through three critical lenses:

Context (Visible): The High-Resolution Anchor

Visible light remains the gold standard for identification. Using a high-resolution sensor with optical zoom (up to 8 MP and 10x), our framework captures the fine-grained structural detail needed for deep learning models to classify objects. Whether it’s reading a serial number on a remote transformer or identifying a specific type of corrosion on a bridge cable, the visible channel provides the "who" and the "what." In a smart home, this allows for precise facial recognition and package identification even at the far end of a driveway.

Condition (Thermal): The Internal Signature

Thermal imaging in the long-wave infrared (LWIR) band allows the system to see emitted heat rather than just reflected light. In industrial settings, heat is often the first indicator of failure. A bearing about to seize, an overloading electrical circuit, or a fluid leak can appear as a "glowing" anomaly in the thermal spectrum long before it manifests as visible damage. By integrating a 640x512 thermal core, we give the system 24/7 "all-weather" detection capabilities that work in darkness and through light fog. For residential applications, thermal sensors provide privacy-first monitoring: they can detect a fall in a bathroom or a kitchen stove left on without capturing high-resolution personal images.

Dimension (Laser): The Spatial Reality

The addition of Time-of-Flight (ToF) laser ranging is what transforms a "smart camera" into a Spatial Intelligence Node. Unlike passive optical sensors, this active sensing component emits a laser pulse and measures the pulse's round-trip time, which is on the order of nanoseconds, to calculate a precise distance along the Z-axis. In smart doorbells, laser ranging acts as a virtual fence, accurately triggering alerts only when someone enters a specific 3D zone, eliminating false alarms from passing cars.
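To make the ranging principle concrete, here is a minimal Python sketch of the time-of-flight calculation and a simple zone check. The function names and the near/far zone limits are hypothetical and chosen for illustration only; this is not IntelliGienic's implementation.

```python
# Speed of light in vacuum (m/s); close enough to air for a sketch.
SPEED_OF_LIGHT_M_PER_S = 299_792_458.0


def tof_range_m(round_trip_s: float) -> float:
    """Convert a round-trip pulse time (seconds) to a one-way distance (meters)."""
    if round_trip_s < 0:
        raise ValueError("round-trip time must be non-negative")
    # The pulse travels to the target and back, so halve the path length.
    return SPEED_OF_LIGHT_M_PER_S * round_trip_s / 2.0


def in_virtual_fence(range_m: float, near_m: float, far_m: float) -> bool:
    """Hypothetical zone check: True when the target lies inside [near_m, far_m]."""
    return near_m <= range_m <= far_m


# Example: a pulse that returns after 94.7 ns corresponds to about 14.2 m.
distance = tof_range_m(94.7e-9)
alert = in_virtual_fence(distance, near_m=0.5, far_m=3.0)  # outside a 0.5-3 m zone
```

Note that 1 ns of round-trip time corresponds to roughly 15 cm of range, which is why fine range accuracy depends on very precise timing.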

By synchronizing this distance data with the camera’s optical axis, the system adds precise XYZ coordinates to every detected object. In optimal conditions (considering target reflectivity and atmospheric clarity), this provides sub-centimeter accuracy. We move from simply saying "there is a crack on that wall" to providing actionable telemetry: "there is a 2mm crack located exactly 14.2 meters away at an elevation of 3.5 meters."
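As a rough illustration of how a range reading can become an XYZ coordinate, the sketch below back-projects a pixel through a pinhole camera model and scales the resulting ray by the measured distance. It assumes the laser range applies to the object at that pixel and that the camera intrinsics (`fx`, `fy`, `cx`, `cy`) are known from calibration; the function name and the example values are hypothetical.

```python
import math


def pixel_range_to_xyz(u, v, range_m, fx, fy, cx, cy):
    """Back-project pixel (u, v) with a measured range into camera-frame XYZ (meters).

    Assumes a pinhole model with Z pointing forward along the optical axis,
    X to the right, and Y down (image convention).
    """
    # Direction of the ray through the pixel, before normalization.
    dx = (u - cx) / fx
    dy = (v - cy) / fy
    dz = 1.0
    norm = math.sqrt(dx * dx + dy * dy + dz * dz)
    # Scale the unit ray by the measured range along that ray.
    return (range_m * dx / norm, range_m * dy / norm, range_m * dz / norm)


# A target at the image center lies straight ahead on the optical axis.
x, y, z = pixel_range_to_xyz(960, 540, 14.2, fx=1400.0, fy=1400.0, cx=960.0, cy=540.0)
```

A real system would also need to correct for lens distortion and for the physical offset between the laser and the camera, which this sketch ignores.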

Why Multi-Spectral Fusion Matters for Your Next Creation

If you are an engineer or product lead designing the next generation of "Physical AI" hardware, the "seams" between these sensors are where your project will either succeed or fail. Simply bolting three sensors to a bracket is not fusion; it is just a collection of parts.

24/7 Reliability and "Truth" Verification

Most AI models are trained on clean data. In the real world, shadows, rain, and night-time operations cause "inference dropouts." A fused system uses the thermal channel to verify what the optical channel sees. If the visible camera sees a "person" but the thermal camera sees no heat signature, the AI can intelligently flag a false positive (like a poster or a reflection). This level of "cross-verification" is essential for mission-critical autonomy.
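The cross-verification idea can be sketched in a few lines of Python. The `Detection` class, the status strings, and the temperature window are all assumptions made for illustration; real thresholds would depend on the thermal core, the scene, and clothing or ambient conditions.

```python
from dataclasses import dataclass


@dataclass
class Detection:
    label: str             # class predicted from the visible channel
    confidence: float      # classifier score in [0, 1]
    roi_max_temp_c: float  # hottest thermal reading inside the same ROI


def cross_verify(det, min_confidence=0.5, warm_range_c=(25.0, 40.0)):
    """Return a status for a visible-light 'person' detection using thermal evidence.

    The 25-40 C window is a placeholder, not a calibrated value.
    """
    if det.label != "person" or det.confidence < min_confidence:
        return "ignored"
    low, high = warm_range_c
    if low <= det.roi_max_temp_c <= high:
        return "confirmed"
    # The optical channel sees a person but there is no matching heat signature.
    return "suspect_false_positive"


real_person = cross_verify(Detection("person", 0.92, 33.5))
poster = cross_verify(Detection("person", 0.88, 18.0))
```

Here the poster case is flagged rather than silently dropped, so a downstream policy can decide whether to suppress the alert or request another look.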

Millimeter Precision in 3D Space

For drones, robotic arms, or autonomous inspection crawlers, "close enough" is not good enough. By aligning the laser rangefinder's boresight with the camera's optical axis, we can overlay measurement data directly onto the AI's "Region of Interest" (ROI). This allows the system to calculate horizontal distance and target height difference in real time, providing the geometric context needed for navigation or precision maintenance.
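The horizontal distance and height difference follow from basic trigonometry once the slant range and the elevation angle of the line of sight are known. The sketch below assumes the elevation angle comes from some external source such as a pan-tilt encoder or an inertial sensor; that source and the function name are hypothetical.

```python
import math


def slant_to_horizontal_and_height(slant_range_m, elevation_deg):
    """Split a slant range into horizontal distance and height difference (meters).

    elevation_deg is the angle of the line of sight above the horizontal;
    negative values mean the target is below the sensor.
    """
    theta = math.radians(elevation_deg)
    horizontal_m = slant_range_m * math.cos(theta)
    height_diff_m = slant_range_m * math.sin(theta)
    return horizontal_m, height_diff_m


# A 10 m slant range at 30 degrees of elevation.
horizontal, height = slant_to_horizontal_and_height(10.0, 30.0)
```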

The IntelliGienic Advantage: Optimized at the Edge

The true challenge of multi-sensor fusion isn't just selecting the hardware; it's managing the underlying data pipeline. When you integrate thermal, visible, and laser sensors, you are dealing with different native frame rates, varying latencies, and distinct data formats. How do you ensure the AI isn't making decisions based on "ghost" data from asynchronous sources?

Solving the Temporal Synchronization Challenge

Our engineering team focused on a Deterministic Hardware Heartbeat. Instead of relying on software-level alignment, which is prone to jitter under high CPU loads, we leverage hardware-level triggering and microsecond timestamping on our system-on-modules (SoMs). This ensures that the "heat" from the thermal core and the "detail" from the RGB sensor are captured at effectively the same physical moment, providing a synchronized truth for the AI's inference engine.
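Even with hardware triggering, a pipeline usually still needs to decide which frames belong together. The following is a minimal sketch of pairing frames by their hardware timestamps and rejecting any pair that drifts beyond a tolerance. The function name and the 500 microsecond tolerance are assumptions for illustration, not a description of the actual framework.

```python
from bisect import bisect_left


def pair_by_timestamp(visible_ts_us, thermal_ts_us, tolerance_us=500):
    """Pair each thermal timestamp with the nearest visible timestamp.

    Both lists hold hardware timestamps in microseconds, sorted ascending.
    Pairs further apart than tolerance_us are dropped, so the model never
    sees a thermal frame matched to a stale visible frame.
    """
    pairs = []
    for t in thermal_ts_us:
        i = bisect_left(visible_ts_us, t)
        # Only the neighbors on either side of the insertion point can be nearest.
        candidates = visible_ts_us[max(i - 1, 0): i + 1]
        if not candidates:
            continue
        best = min(candidates, key=lambda v: abs(v - t))
        if abs(best - t) <= tolerance_us:
            pairs.append((best, t))
    return pairs


visible = [0, 33_333, 66_666, 100_000]   # roughly 30 fps
thermal = [100, 40_000, 100_200]         # slower, slightly offset stream
matched = pair_by_timestamp(visible, thermal)
```

In this example the thermal frame at 40,000 µs has no visible frame within tolerance, so it is discarded instead of being fused with mismatched data.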

NPU Headroom for the Future

By utilizing the dedicated Neural Processing Units (NPUs) on our high-performance platforms, we’ve created a data "superhighway" that leaves plenty of headroom for advanced autonomous behaviors:

Cross-Modal Verification: Using thermal anomaly detection to instantly trigger high-res optical zoom for target verification.

Spatial Telemetry: Fusing laser distance data with visual ROI (Region of Interest) to provide real-time XYZ coordinates for every detected object.

Predictive Diagnostics: Running edge-based models to track temperature trends over time, moving from reactive alerts to predictive maintenance.
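As one simple way to picture the predictive diagnostics item, the sketch below fits a straight line to recent temperature readings and estimates how long until a limit would be reached if the trend continued. A least-squares linear fit is used here purely as an illustrative stand-in for an edge-based model, and the function names and limit value are hypothetical.

```python
def temperature_slope_c_per_hour(times_h, temps_c):
    """Least-squares slope of temperature (C) against time (hours)."""
    n = len(times_h)
    if n < 2 or n != len(temps_c):
        raise ValueError("need at least two matching samples")
    mean_t = sum(times_h) / n
    mean_y = sum(temps_c) / n
    sxx = sum((t - mean_t) ** 2 for t in times_h)
    if sxx == 0:
        raise ValueError("timestamps must not all be identical")
    sxy = sum((t - mean_t) * (y - mean_y) for t, y in zip(times_h, temps_c))
    return sxy / sxx


def hours_until_limit(times_h, temps_c, limit_c):
    """Estimate hours until limit_c is reached, or None if not trending upward."""
    slope = temperature_slope_c_per_hour(times_h, temps_c)
    if slope <= 0:
        return None
    # Extrapolate from the latest reading; clamp at zero if already over the limit.
    return max((limit_c - temps_c[-1]) / slope, 0.0)


# A bearing warming by 2 C per hour, checked against a hypothetical 80 C limit.
eta_h = hours_until_limit([0, 1, 2, 3], [60.0, 62.0, 64.0, 66.0], limit_c=80.0)
```

A linear extrapolation like this is only a first approximation; real thermal failures are often nonlinear, so it is better treated as an early warning than a precise forecast.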

Real-World Applications: From Infrastructure to Industry

Where does this multi-dimensional vision provide the most value?

Infrastructure Inspection: Imagine a system that can scan a high-voltage power line. It uses thermal to find a hot spot, optical zoom to inspect the physical insulator, and laser ranging to ensure the line isn't sagging too close to nearby vegetation.

Perimeter Security: A system that detects a human-sized heat signature 500 meters away in total darkness, automatically zooms in to confirm the identity, and provides security teams with the exact GPS coordinates of the intruder.

Search and Rescue: Fusing thermal "life signs" with high-res optical terrain data to find missing persons in rugged environments where human eyes would fail.

Smart Living & Residential Safety: A system that provides 24/7 guardian-level awareness. It uses thermal to monitor for house fires or elderly falls, optical zoom to verify family members, and laser ranging to create secure, touchless entry zones.

Let's Build the Future Together

At IntelliGienic, we don't just sell components; we provide the architectural foundation for the next generation of AI-enabled hardware. We’ve done the hard work of solving the synchronization, the multi-axial parallax calibration, and the data pipeline optimization so that you can focus on your core application.

The era of "simple" vision is over. The era of Autonomous Perception has begun. If your next project requires a system that understands distance, heat, and detail simultaneously, our experience in multi-sensor fusion is your shortcut to market.

Are you ready to give your AI the vision it deserves?

Contact our engineering team today to discuss how our Multi-Spectral Fusion framework can be integrated into your next Edge AI creation.
